Unlocking the Power of TF-IDF with Python

TF – IDF (Term Frequency – Inverse Document Frequency)

Hello, future data scientists and coding aficionados! Today, we’re venturing into the realm of text analysis with a focus on one of its most powerful tools: TF-IDF. Standing for Term Frequency-Inverse Document Frequency, TF-IDF is a statistical measure that evaluates the importance of a word within a document relative to a collection of documents, known as a corpus. This technique is not only fascinating but also pivotal in various applications, including information retrieval, keyword extraction, and document classification.

TF-IDF is employed in the field of information retrieval, which uses a number of statistical techniques to translate text into quantitative vectors.

Text mining and user modelling are accomplished using these techniques.

TF-IDF is a statistical method for extracting information from documents written in human language. It works by scoring how relevant a term is to a document within a corpus.

It’s hard for a computer to extract information from a human-readable document, so TF-IDF converts the text to a numerical format.

In TF-IDF, each word is measured by how often it appears in a sentence and by how many sentences it appears in. In this walkthrough, each sentence of the corpus is treated as one document.

We need a corpus to apply TF-IDF to, so we will use the following corpus in our code. (A corpus is a collection of text; it may or may not be structured, depending on the use case.)

from google.colab import drive

drive.mount('/content/drive')

with open('/content/drive/MyDrive/Python Course/NLP/TFIDF/corpustfidf.txt', 'r', encoding='utf8', errors='ignore') as file:
    study = file.read().lower()

print(study)

python is a high-level, general-purpose programming language.

its design philosophy emphasizes code readability with the use of significant indentation.

we offer high end assistance for your data science needs.

we offer courses in data science, business intelligence, marketing analytics and website analytics

In business intelligence course we offer an insight to creation of dashboards data handling through bigquery's.

you will be instructed by a experinced data scientist with extracting,managing and moulding data to your needs.

market analysis course will be taught in these lines, how to use surveys, interviews, focus groups, and customer observation to benefit the business.

market analysis is very important field for new businesses to gain a footing and old ones to grow.

Term frequency

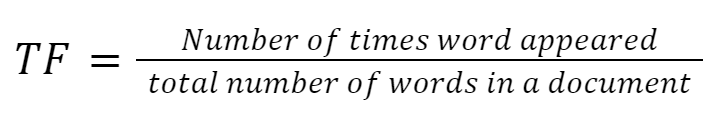

Term frequency (TF) measures how often a word appears in a document relative to all the other words in that document. The number of times the word appears in the document is divided by the total number of words in the document. This tells us how common the word is within that document.

Before we move on, we need to remove stop words such as is, was, to, from, and in. These words are very frequent but carry little meaning, so we remove them before the calculations to stop them from overshadowing other words.
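As a formula, this can be sketched as follows. The symbols are notation assumed here for illustration: $f_{t,d}$ is the number of times term $t$ appears in document $d$, and the denominator is the total number of words in $d$.

$$
\mathrm{tf}(t, d) = \frac{f_{t,d}}{\sum_{t'} f_{t',d}}
$$

For example, if a document contains five words and one of them is "python", then $\mathrm{tf}(\text{python}, d) = 1/5 = 0.2$.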

Tokenization:

In the following code, we split the document into a list of sentences and each sentence into a list of words.

tokens=[[y for y in x.split(' ')] for x in study.split('\n') if x!='']

tokens[0]

['python',
 'is',
 'a',
 'high-level,',
 'general-purpose',
 'programming',
 'language.']

Remove Stopwords

Remove stop words from tokens using nltk library’s stop words list.

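# import libraries
import re
import nltk
from nltk.corpus import stopwords

# download the stop word list once, then load the English stop words
nltk.download('stopwords')
stop = set(stopwords.words('english'))

# function to remove stopwords and special characters
def process(word):
    # check for empty strings
    if word != '':
        # remove stopwords
        if word.lower() not in stop:
            # remove special characters from tokens
            return re.sub('[^a-zA-Z0-9]+', '', word)

# keep only tokens that survive cleaning (drops None and empty strings)
tokens = [[process(y) for y in x.split(' ') if process(y)] for x in study.split('\n') if x != '']

tokens

uniques = set([y for x in tokens for y in x])

Calculate term frequency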

In the following code, we calculate the term frequency of each word within its sentence. This process can be called a TF vectorizer.

def tfc(word, sen_list):
    # occurrences of the word divided by the number of words in the sentence
    return sen_list.count(word) / len(sen_list)

tf = [{y: tfc(y, x) for y in x} for x in tokens]

# show the term frequencies of the first two sentences
tf[:2]

[{'python': 0.2,
  'highlevel': 0.2,
  'generalpurpose': 0.2,
  'programming': 0.2,
  'language': 0.2},
 {'design': 0.125,
  'philosophy': 0.125,
  'emphasizes': 0.125,
  'code': 0.125,
  'readability': 0.125,
  'use': 0.125,
  'significant': 0.125,
  'indentation': 0.125}]

Inverse Document Frequency

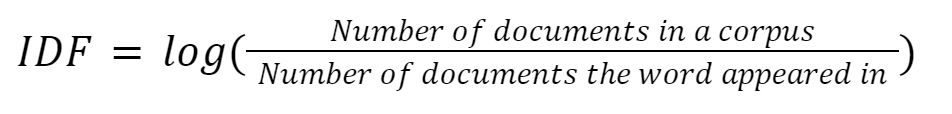

TF works on how frequent words are within a document, but it does not account for words that are rare yet important. IDF works to raise the value of uncommon words.

IDF is used as a normalizer to reduce the value of common words while increasing the value of rare words.
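One common way to write this, and the form the code below uses, is a base-10 logarithm of a ratio of document counts. The symbols are assumed notation: $N$ is the number of documents in the corpus and $n_t$ is the number of documents that contain term $t$.

$$
\mathrm{idf}(t) = \log_{10}\frac{N}{n_t}
$$

For example, in a corpus of 10 documents, a word found in only one document gets $\log_{10}(10/1) = 1$, while a word found in all ten gets $\log_{10}(10/10) = 0$.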

Calculate IDF

In the following code, we count the number of sentences in which a word appears, divide the number of documents in the corpus by that count, and take the logarithm of the result.

This process can be called an IDF vectorizer.

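import math

# number of documents (sentences) in the corpus
len_doc = len(tokens)

def idfc(uniques, tokens, len_doc):
    x = {}
    for i in uniques:
        # count the documents that contain the word
        counter = 0
        for j in tokens:
            if i in j:
                counter += 1
        x[i] = math.log10(len_doc / counter)
    return x

idf = idfc(uniques, tokens, len_doc)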

idf

{'2007': 1.4313637641589874,
 '2000': 1.4313637641589874,
 'focus': 1.130333768495006,
 'numerical': 1.4313637641589874,
 'related': 1.4313637641589874,
 'garbagecollected': 1.4313637641589874,
 'usage': 1.130333768495006,
 'become': 1.4313637641589874,
 '30': 1.4313637641589874,
 'python': 0.43136376415898736,
 'paradigms': 1.4313637641589874,
 'created': 1.4313637641589874,
 'creator': 0.9542425094393249,
 'would': 1.4313637641589874}

TF-IDF

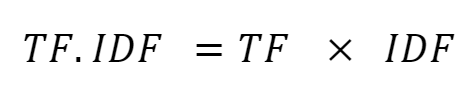

The TF-IDF value of a term is calculated by multiplying the TF and IDF values for that term, which gives us the actual importance of the term. Important words have a higher TF-IDF value.

The following step concludes the calculation of the TF-IDF values.
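In symbols, using assumed notation where $\mathrm{tf}(t, d)$ is the term frequency of term $t$ in document $d$ and $\mathrm{idf}(t)$ is its inverse document frequency:

$$
\mathrm{tfidf}(t, d) = \mathrm{tf}(t, d) \times \mathrm{idf}(t)
$$

As a check against the values in this walkthrough, "python" has a TF of 0.2 in the first sentence and an IDF of about 0.4314, so its TF-IDF is $0.2 \times 0.4314 \approx 0.0863$.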

Calculate TF-IDF

Multiply each word’s TF value by its matching IDF value.

tf_idf = [{word: value * idf[word] for word, value in doc.items()} for doc in tf]

tf_idf[0]

{'python': 0.08627275283179747,
 'highlevel': 0.22606675369900123,
 'generalpurpose': 0.2862727528317975,
 'programming': 0.1464787519645937,
 'language': 0.1464787519645937}

TF-IDF can be used in cases of information retrieval, text summarization, keyword extraction, vectors, and word embeddings.
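The steps of this walkthrough can be condensed into a single dependency-free sketch. It is a minimal illustration, not the code used above: `STOP_WORDS` is a tiny hypothetical stop word list standing in for NLTK's, the names `tokenize` and `tf_idf` and the sample text are made up for the example, and stop words are filtered after cleaning rather than before.

```python
import math
import re

# Hypothetical mini stop word list, used only to keep this sketch dependency-free.
STOP_WORDS = {"is", "a", "the", "of", "with", "its", "we", "for", "your", "in", "and"}

def tokenize(text):
    """Split text into one cleaned token list per non-empty line."""
    docs = []
    for line in text.lower().split("\n"):
        # strip special characters from every whitespace-separated word
        words = [re.sub(r"[^a-z0-9]+", "", w) for w in line.split()]
        # drop empty strings and stop words
        words = [w for w in words if w and w not in STOP_WORDS]
        if words:
            docs.append(words)
    return docs

def tf_idf(docs):
    """Return one {word: tf * idf} dict per document, with idf = log10(N / n_t)."""
    n_docs = len(docs)
    # document frequency: number of documents that contain each word
    doc_freq = {}
    for doc in docs:
        for word in set(doc):
            doc_freq[word] = doc_freq.get(word, 0) + 1
    scores = []
    for doc in docs:
        scores.append({
            word: (doc.count(word) / len(doc)) * math.log10(n_docs / doc_freq[word])
            for word in set(doc)
        })
    return scores

sample = "python is a programming language\npython is readable\nwe offer data science courses"
for doc_scores in tf_idf(tokenize(sample)):
    print(doc_scores)
```

In this sample, "python" appears in two of the three sentences, so it scores lower in the first sentence than "programming" or "language", which appear in only one.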

Other corpus vectorization methods

TF-IDF vs. Word2Vec vs. Bag-of-Words vs. BERT.

Bag-Of-Words

Basically, a bag of words is a count of every word in a corpus. For each sentence, we count how many times each word of the corpus vocabulary appears in it. It lacks the IDF normalisation of TF-IDF.
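A bag of words can be sketched in a few lines with the standard library. This is an illustrative example under assumptions: the function name `bag_of_words` and the two tiny token lists are made up here, and the input is expected to be already tokenized.

```python
from collections import Counter

def bag_of_words(docs):
    """Return the sorted vocabulary and one raw-count vector per document."""
    # the vocabulary is every distinct word in the corpus
    vocab = sorted({word for doc in docs for word in doc})
    vectors = []
    for doc in docs:
        counts = Counter(doc)
        # one count per vocabulary word; a missing word counts as 0
        vectors.append([counts[word] for word in vocab])
    return vocab, vectors

docs = [["data", "science", "data"], ["market", "analysis"]]
vocab, vectors = bag_of_words(docs)
print(vocab)    # ['analysis', 'data', 'market', 'science']
print(vectors)  # [[0, 2, 0, 1], [1, 0, 1, 0]]
```

The vectors hold raw counts only, so a word that appears in every sentence is weighted the same as a rare one.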

Word2Vec

Word2Vec describes its function through its name: it is a word vectorizer. Word2Vec uses a two-layer neural network trained on the corpus. It learns each word's vector from the context in which the word appears, whereas TF-IDF is not aware of a word's context and does not capture its semantic meaning.

BERT (Bidirectional Encoder Representations From Transformers)

Whereas Word2Vec uses a neural network with only two layers, BERT goes a step further and uses a deep neural network to create corpus vectors.
